ai.nocturnus/logic-server

Agent reasoning, memory, and token-optimized context for AI applications.

Unknown · Not tested

Score: 9 (grade F) · No CVEs · unknown

Quick Install

{
  "mcpServers": {
    "ai-nocturnus-logic-server": {
      "command": "<see-readme>",
      "args": []
    }
  }
}

No install config available. Check the server's README for setup instructions.

Should you use this server?

Is it safe?

No package registry to scan.

No authentication — any process on your machine can connect.

License not specified.

Is it maintained?

Commit history unknown.

Will it work with my client?

Transport: stdio. Works with Claude Desktop, Cursor, Claude Code, and most MCP clients.
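To make "stdio transport" concrete: an MCP client talks to the server process over stdin/stdout using newline-delimited JSON-RPC 2.0 messages. The sketch below only builds the first `initialize` frame a client would write; it does not launch this server, since the page gives no start command. The helper name `encode_message`, the client name, and the chosen protocol version string are assumptions for illustration.

```python
import json


def encode_message(message: dict) -> bytes:
    """Serialize one JSON-RPC message as a single newline-terminated line."""
    # json.dumps escapes newlines inside strings, so the frame stays on one line.
    return (json.dumps(message, separators=(",", ":")) + "\n").encode("utf-8")


# First request a client sends after starting the server process.
initialize = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "initialize",
    "params": {
        "protocolVersion": "2024-11-05",  # assumption: one published MCP revision
        "capabilities": {},
        "clientInfo": {"name": "example-client", "version": "0.1.0"},  # hypothetical
    },
}

frame = encode_message(initialize)
print(frame.decode("utf-8"), end="")
```

In practice an MCP client such as Claude Desktop handles this framing itself; the snippet is only meant to show what travels over the pipe.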

README

NocturnusAI

The context engineering engine for AI agents: send only what changed.

Before / After

# ❌ Without NocturnusAI: replay everything, every turn
messages = system_prompt + full_history + tool_outputs  # ~1,259 tokens/turn
response = llm(messages)                                # $13,600/mo at scale

# ✅ With NocturnusAI: send only what changed
ctx = nocturnus.process_turns(raw_turns)                # extract → infer → delta
messages = system_prompt + ctx.briefing_delta           # ~221 tokens/turn
response = llm(messages)                                # $2,400/mo, same accuracy
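The "send only what changed" idea can be illustrated with a minimal sketch that does not use the NocturnusAI SDK. `Delta`, `compute_delta`, `render_delta`, and the fact strings are hypothetical names chosen for illustration; the real extract → infer → delta pipeline is presumably more involved.

```python
from dataclasses import dataclass, field


@dataclass
class Delta:
    """Facts that changed between two turns (hypothetical structure)."""
    added: set[str] = field(default_factory=set)
    removed: set[str] = field(default_factory=set)


def compute_delta(previous: set[str], current: set[str]) -> Delta:
    """Return only the facts that differ between two snapshots."""
    return Delta(added=current - previous, removed=previous - current)


def render_delta(delta: Delta) -> str:
    """Format the delta as a short briefing, sorted for stable output."""
    lines = [f"+ {fact}" for fact in sorted(delta.added)]
    lines += [f"- {fact}" for fact in sorted(delta.removed)]
    return "\n".join(lines)


# Two consecutive snapshots of extracted facts (made-up example data).
turn_1 = {"order(42, pending)", "customer(alice)"}
turn_2 = {"order(42, shipped)", "customer(alice)"}

delta = compute_delta(turn_1, turn_2)
briefing = render_delta(delta)
print(briefing)
```

The unchanged fact `customer(alice)` never appears in the briefing, which is where the token saving would come from in this simplified picture.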

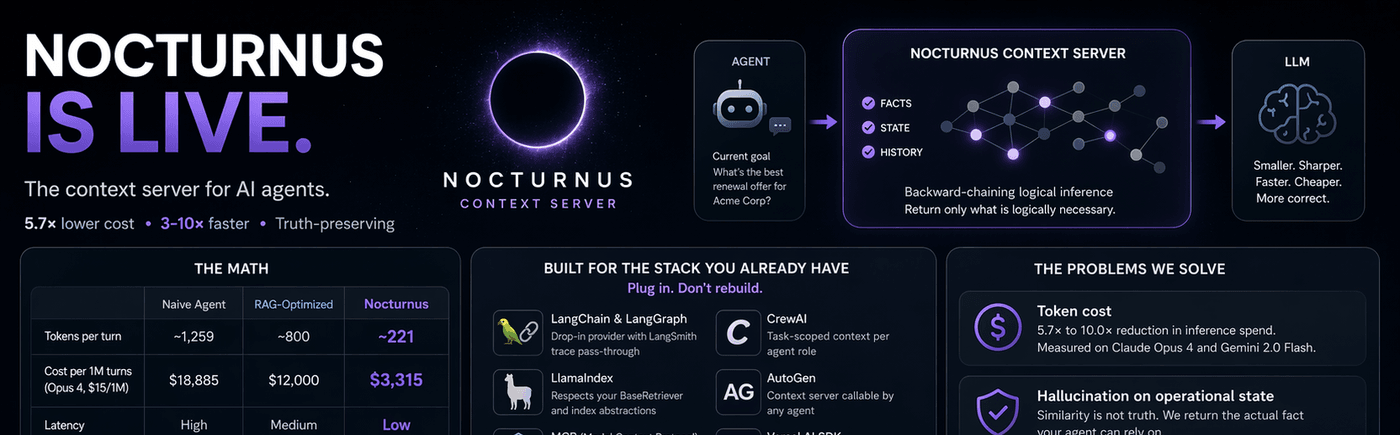

The Numbers

Measured on live APIs over a 15-turn product support conversation, using real `usage.input_tokens` counts. Run it yourself.

| | Naive replay | RAG-optimized | NocturnusAI |
|---|---|---|---|
| Tokens per turn | ~1,259 | ~800 | ~221 |
| Cost per month (1K req/hr, Opus 4, $15 per 1M tokens) | $13,600 | $12,000 | $2,400 |
| Latency | high | medium | low |
| Truth-preserving | no | no | yes |

Claude Opus 4: 5.7× reduction. Gemini 2.0 Flash: 10.0×. Full calculations.
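The naive-replay and NocturnusAI cost figures, and the 5.7× ratio, can be reproduced from the per-turn token counts with a few lines of arithmetic. This is a sketch under stated assumptions that the README does not spell out: a 30-day month at a constant 1,000 requests per hour, and only input tokens billed at $15 per million.

```python
REQUESTS_PER_HOUR = 1_000
HOURS_PER_MONTH = 24 * 30        # assumption: 30-day month, constant load
PRICE_PER_MILLION_TOKENS = 15.0  # USD; assumption: input tokens only


def monthly_cost(tokens_per_turn: int) -> float:
    """Monthly spend in USD for a given average input size per request."""
    requests = REQUESTS_PER_HOUR * HOURS_PER_MONTH
    return tokens_per_turn * requests * PRICE_PER_MILLION_TOKENS / 1_000_000


naive = monthly_cost(1_259)      # naive replay, tokens per turn from the table
nocturnus = monthly_cost(221)    # NocturnusAI, tokens per turn from the table
reduction = 1_259 / 221

print(f"naive replay: ${naive:,.0f}/mo")
print(f"NocturnusAI:  ${nocturnus:,.0f}/mo")
print(f"reduction:    {reduction:.1f}x")
```

Under these assumptions the two costs come to about $13,597 and $2,387 per month, which round to the $13,600 and $2,400 shown in the table.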

Install

pip install nocturnusai # Python

npm install nocturnusai-sdk # TypeScript

docker run -p 9300:9300 ghcr.io/auctalis/nocturnusai:latest # Docker

Or use the setup wizard:

curl -fsSL https://raw.githubusercontent.com/Auctalis/nocturnusai/main/install.sh | bash

Why Developers Star This Repo

- Reproducible token reduction — benchmark in the repo, methodology published, run it against your own workload

- Deterministic inference — same query, same result, every time. No embedding drift, no cosine similarity lottery

- Truth maintenance — retract a fact, all derived conclusions auto-retract. No stale context, no hallucination on operational state

- Plugs into existing stacks — LangChain, LlamaIndex, CrewAI, AutoGen, MCP, Vercel AI SDK, OpenAI Agents SDK, Mastra

- Benchmarkable against naive replay — numbers derived, not invented. Every claim traces to a notebook cell
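The truth-maintenance bullet above (retract a fact and its derived conclusions retract with it) can be sketched with a toy dependency-tracking store. This is not NocturnusAI's API or implementation: `FactStore` and its methods are hypothetical, and the sketch ignores cases a real engine must handle, such as a conclusion supported by more than one independent justification.

```python
from collections import defaultdict


class FactStore:
    """Toy justification-based store: derived facts record their premises."""

    def __init__(self) -> None:
        self.facts: set[str] = set()
        # Maps a premise to the derived facts that depend on it.
        self.dependents: dict[str, set[str]] = defaultdict(set)

    def assert_fact(self, fact: str) -> None:
        self.facts.add(fact)

    def derive(self, conclusion: str, premises: list[str]) -> bool:
        """Add a conclusion only if every premise currently holds."""
        if not all(p in self.facts for p in premises):
            return False
        self.facts.add(conclusion)
        for p in premises:
            self.dependents[p].add(conclusion)
        return True

    def retract(self, fact: str) -> None:
        """Remove a fact and, recursively, everything derived from it."""
        if fact not in self.facts:
            return
        self.facts.discard(fact)
        for derived in self.dependents.pop(fact, set()):
            self.retract(derived)


store = FactStore()
store.assert_fact("paid(order42)")
store.assert_fact("in_stock(widget)")
store.derive("shippable(order42)", ["paid(order42)", "in_stock(widget)"])
store.derive("notify(alice)", ["shippable(order42)"])

store.retract("paid(order42)")  # cascades to shippable(...) and notify(...)
print(sorted(store.facts))
```

After the retraction only `in_stock(widget)` remains, because both derived facts traced back to the retracted payment fact. The same inputs always produce the same result, which is the sense of "deterministic" in the list above.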

Framework Quickstarts

| Framework | Integration | Link |
|---|---|---|
| LangChain / LangGraph | Drop-in NocturnusContextProvider, LangSmith trace pass-through | Docs |
| CrewAI | Task-scoped context per agent role | Docs |
| AutoGen | Context server callable by any agent | Docs |
| MCP | Spec-compliant server for Claude Desktop, Cursor, Continue | Config |
| OpenAI Agents SDK | Context middleware, no tool modifications | Docs |
| Vercel AI SDK | Edg |

Test This Server

No automated test available for this server. Check the GitHub README for setup instructions.

Score Breakdown

Security

3/30. No known vulnerabilities.

- ○ Known CVEs: No package registry to scan

- ○ Tool safety: No tools to analyze

- ○ Tool poisoning: No tools to analyze

- ○ Injection vectors: No tools to analyze

- ✓ Tool stability: Tool definitions stable

- ○ Dependency health: No package to analyze

- ✗ License: No license specified

- ○ Authentication: No authentication required

- ○ Repository: Active repo

CVEs checked daily via OSV.dev. Score algorithm is open source.

Frequently Asked Questions

- Is ai.nocturnus/logic-server safe to use?

- ai.nocturnus/logic-server has no known CVEs as of the latest MCPpedia security scan. It does not require authentication, so any local process can connect — keep this in mind in shared environments.

- How do I install ai.nocturnus/logic-server?

- ai.nocturnus/logic-server can be installed by cloning its GitHub repository and following the setup instructions in the README.

- What AI clients work with ai.nocturnus/logic-server?

- ai.nocturnus/logic-server uses stdio transport, so it works with Claude Desktop, Cursor, Claude Code, and most other MCP clients that support stdio.

Similar servers

Sequential Thinking MCP Server

Official · No CVEs · 98 · A

Dynamic problem-solving through sequential thought chains

1 tool · stdio · 84.0k · 112.2k/wk · 2d ago

XcodeBuildMCP

Official · No CVEs · 97 · A

A Model Context Protocol (MCP) server and CLI that provides tools for agent use when working on iOS and macOS projects.

2 tools · remote · 5.2k · 46.6k/wk · 1d ago

Python SDK

Official · No CVEs · 95 · A

The official Python SDK for Model Context Protocol servers and clients

1 tool · remote · 22.7k · 166.4k/wk · 13h ago

Gemini CLI

No CVEs · 95 · A

An open-source AI agent that brings the power of Gemini directly into your terminal.

8 tools · remote · 101.5k · 891.1k/wk · 5h ago
