
io.github.runyourempire/4da-mcp-server

Scan deps for CVEs via MCP. Auto-detects stack, queries OSV.dev. Zero config, privacy-first.

- Tools: 0
- Known CVEs: none
- License: none
- Maintenance: unknown
- Commit data: none
- Tested: no
- Transport: stdio
- Grade: F

Step 1: Install in your client

The config is the same across clients; only the file and its path differ.

Claude Desktop (untested) · stdio · Node 18+

Paste into ~/Library/Application Support/Claude/claude_desktop_config.json (the macOS location):

```json
{
  "mcpServers": {
    "io-github-runyourempire-4da-mcp-server": {
      "command": "<see-readme>",
      "args": []
    }
  }
}
```

Are you the author?

Add this badge to your README to show your security score and help users find safe servers.

Embed in your README:

[io.github.runyourempire/4da-mcp-server on MCPpedia](https://mcppedia.org/s/io-github-runyourempire-4da-mcp-server)

README

What io.github.runyourempire/4da-mcp-server does

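Scans a project's dependencies for known CVEs over MCP. It auto-detects the project's stack and queries the OSV.dev vulnerability database, needs zero configuration, and is designed to be privacy-first.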
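The README is not reproduced on this page, so how the server "auto-detects stack" is not documented here. As a hypothetical sketch only, stack detection can be as simple as mapping well-known manifest file names to the ecosystem identifiers OSV.dev uses. The `detectEcosystems` function and the manifest table below are illustrative assumptions, not the server's actual code.

```typescript
// Hypothetical manifest-to-ecosystem table. The values are OSV.dev
// ecosystem identifiers; the file list is illustrative, not exhaustive.
const MANIFEST_ECOSYSTEMS = new Map<string, string>([
  ["package.json", "npm"],
  ["package-lock.json", "npm"],
  ["requirements.txt", "PyPI"],
  ["pyproject.toml", "PyPI"],
  ["Cargo.toml", "crates.io"],
  ["Cargo.lock", "crates.io"],
  ["go.mod", "Go"],
  ["pom.xml", "Maven"],
  ["Gemfile", "RubyGems"],
  ["composer.json", "Packagist"],
]);

// Returns the distinct OSV ecosystems implied by the given file names,
// sorted so the result is deterministic.
function detectEcosystems(fileNames: string[]): string[] {
  const found = new Set<string>();
  for (const name of fileNames) {
    const ecosystem = MANIFEST_ECOSYSTEMS.get(name);
    if (ecosystem !== undefined) {
      found.add(ecosystem);
    }
  }
  return Array.from(found).sort();
}
```

In Node 18+ the input could come from `fs.readdirSync(projectRoot)`. A `Map` is used rather than a plain object so that a file named `constructor` or `toString` cannot match an inherited prototype property.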

Test This Server

No automated test available for this server. Check the GitHub README for setup instructions.

Loading README…

Scored, not listed

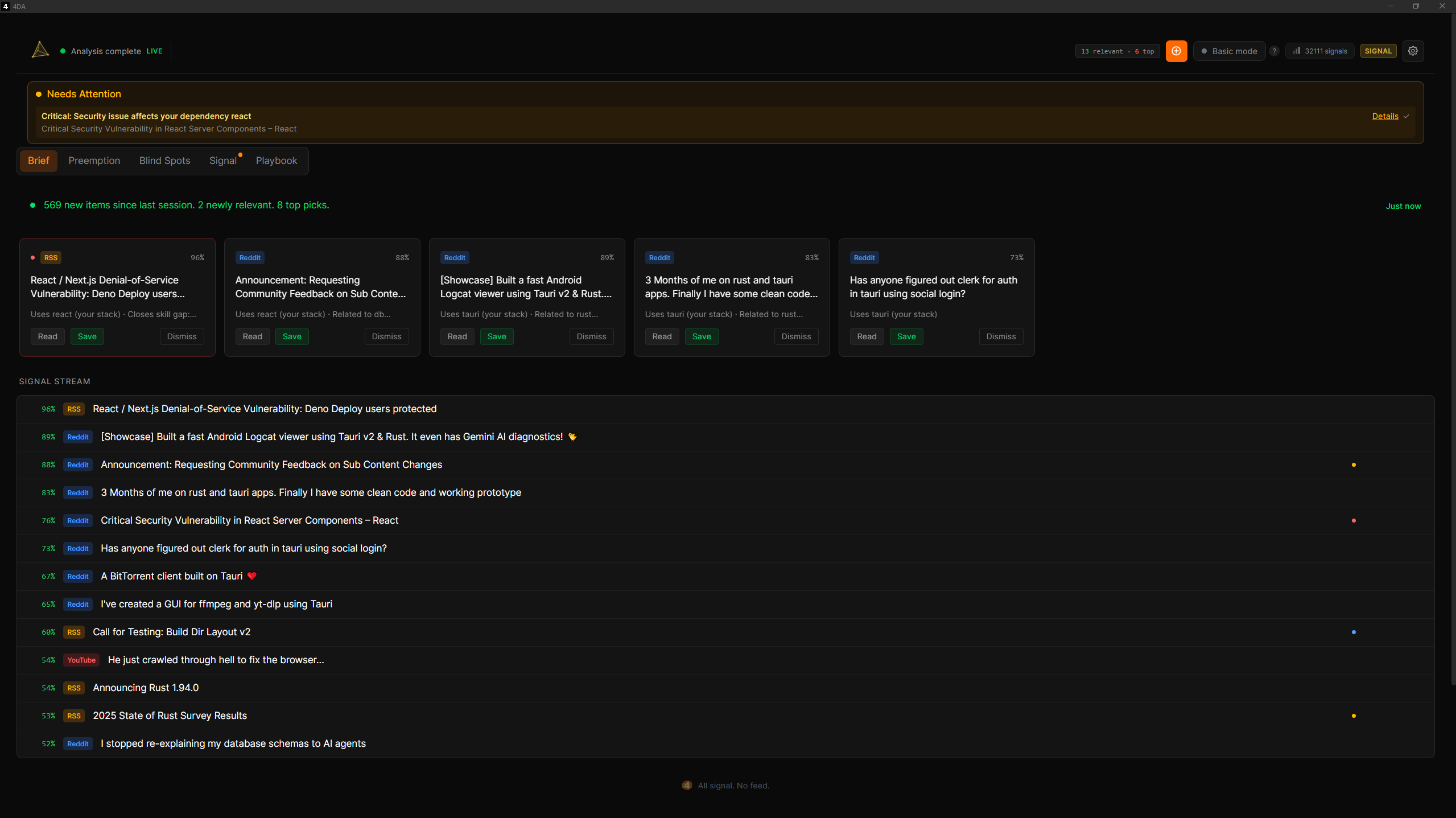

Why this score

The score is built from five weighted categories.

Score breakdown

15/100 across five weighted dimensions: Security, Maintenance, Efficiency, Documentation, and Compatibility.

Categories

Security

- OSV.dev: no known CVEs.
- No package registry to scan.
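The OSV.dev check in this section can be illustrated with OSV.dev's public query API, which takes a package name, ecosystem, and version at `POST https://api.osv.dev/v1/query` and returns an empty JSON object when no advisories match. The sketch below is hypothetical: `buildOsvQuery`, `cveIds`, and `queryOsv` are illustrative names, not this server's API, and it assumes Node 18+ for the global `fetch`.

```typescript
// Shape of a single-package query for POST https://api.osv.dev/v1/query.
interface OsvQuery {
  package: { name: string; ecosystem: string };
  version: string;
}

// Only the fields this sketch reads; real responses carry many more.
interface OsvVulnerability {
  id: string;
  aliases?: string[];
}

interface OsvQueryResponse {
  vulns?: OsvVulnerability[];
}

function buildOsvQuery(name: string, ecosystem: string, version: string): OsvQuery {
  return { package: { name, ecosystem }, version };
}

// Collects CVE identifiers from a response. Advisories are often keyed by
// GHSA-/PYSEC- ids with the CVE listed under `aliases`, so both are checked.
// An empty response ({}) yields an empty list, i.e. no known CVEs.
function cveIds(response: OsvQueryResponse): string[] {
  const ids = new Set<string>();
  for (const vuln of response.vulns ?? []) {
    for (const id of [vuln.id, ...(vuln.aliases ?? [])]) {
      if (id.startsWith("CVE-")) {
        ids.add(id);
      }
    }
  }
  return Array.from(ids).sort();
}

// Sends one query to OSV.dev (uses the global fetch available in Node 18+).
async function queryOsv(query: OsvQuery): Promise<OsvQueryResponse> {
  const res = await fetch("https://api.osv.dev/v1/query", {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(query),
  });
  if (!res.ok) {
    throw new Error(`OSV.dev returned HTTP ${res.status}`);
  }
  return (await res.json()) as OsvQueryResponse;
}
```

For example, `cveIds(await queryOsv(buildOsvQuery("lodash", "npm", "4.17.20")))` sends one request and returns the matching CVE ids. A scanner covering a whole lockfile would more likely batch packages through OSV.dev's `/v1/querybatch` endpoint instead of issuing one request per package.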


Frequently Asked Questions

- Is io.github.runyourempire/4da-mcp-server safe to use?

  It has no known CVEs as of the latest MCPpedia security scan. It does not require authentication, so any local process can connect to it; keep this in mind in shared environments.

- How do I install io.github.runyourempire/4da-mcp-server?

  Clone its GitHub repository and follow the setup instructions in the README.

- What AI clients work with io.github.runyourempire/4da-mcp-server?

  It uses stdio transport and is compatible with Claude Desktop, Cursor, Claude Code, and most other MCP clients that support stdio.
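With stdio transport, an MCP client launches the server as a child process and exchanges newline-delimited JSON-RPC 2.0 messages over its stdin and stdout. Since the listing reports 0 tools, a client could check what the server actually exposes by sending `tools/list` after the initialize handshake. The sketch below only builds those messages; the launch command is elided on this page (`<see-readme>`), and the client name, version, and the `2024-11-05` protocol revision are illustrative assumptions.

```typescript
// A JSON-RPC 2.0 message as used by MCP. Requests carry an `id`;
// notifications (such as notifications/initialized) omit it.
interface JsonRpcMessage {
  jsonrpc: "2.0";
  id?: number;
  method: string;
  params?: Record<string, unknown>;
}

// stdio framing: one JSON object per line. JSON.stringify without an indent
// argument escapes newlines inside strings, so the payload never contains a
// raw "\n" and the trailing newline is an unambiguous delimiter.
function frame(message: JsonRpcMessage): string {
  return JSON.stringify(message) + "\n";
}

// Builds the bytes for initialize, the initialized notification, and a
// tools/list request. A real client writes the first line, waits for the
// initialize response, and only then sends the remaining messages.
function handshakeAndListTools(): string {
  const messages: JsonRpcMessage[] = [
    {
      jsonrpc: "2.0",
      id: 1,
      method: "initialize",
      params: {
        protocolVersion: "2024-11-05", // assumed protocol revision
        capabilities: {},
        clientInfo: { name: "example-client", version: "0.1.0" }, // hypothetical
      },
    },
    { jsonrpc: "2.0", method: "notifications/initialized" },
    { jsonrpc: "2.0", id: 2, method: "tools/list" },
  ];
  return messages.map(frame).join("");
}
```

Keeping framing in a single `frame` function makes the one-message-per-line rule easy to audit; the response to request `id: 2` lists the server's tools, if any.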

Related

Similar servers

- Persistent memory using a knowledge graph (84.3k · 5)
- Privacy-first. MCP is the protocol for tool access. We're the virtualization layer for context. (8.8k · 6)
- Make HTTP requests and fetch web content (84.3k · 1)
- Read, write, and manage files on the local filesystem (84.3k · 7)
